Automated Vehicles Update: California DMV Releases Draft Rules and Some Notes about that Crash Study

CALIFORNIA DMV AV OPS RULES PROPOSED: On December 16, the California Department of Motor Vehicles released its draft licensing and operations rules for consumer automated vehicles, or “autonomous vehicles” as defined in California statute (CA Veh Code § 38750). The draft rules were supposed to have been released in August 2014, so California DMV is more than a year late. California DMV summarizes the rules here. AV consultant Brad Templeton provides a critical summary of the provisions here. A few provisions worth noting:

- The proposed definition of “operator” (which would be codified at 13 CCR § 227.02(p)) “is the person who possesses the proper class of license for the type of vehicle being operated, has direct control over the operation of an autonomous vehicle, and has engaged the autonomous technology while sitting in the driver seat of the vehicle.”

- The operator must not only have a driver license; she must also have a special “certificate issued by the department to permit the operation of autonomous vehicles” (§ 227.84(a)) “after completing a behind the wheel training program” (§ 227.84(b)) that “shall include a demonstration of the proper operation of the autonomous technology, including but not limited to: how to engage and disengage the autonomous mode, how to override unauthorized or spurious commands received by the autonomous technology in the event of a cyber-attack, and the operator’s responsibility to monitor the safe operation of the vehicle at all times.” (§ 227.84(b)(1))

- Even worse, “[t]he operator shall be responsible for monitoring the safe operation of the vehicle at all times and be capable of taking over immediate control of the vehicle in the event of an autonomous technology failure or other emergency.” (§ 227.84(c))

What does this all mean? It means that a licensed driver must be in the driver seat at all times and must complete an additional certification process, that fully automated vehicles lacking traditional steering, throttling, and braking controls are prohibited, and that self-driving taxis capable of operating with no one in the vehicle are doubly prohibited.

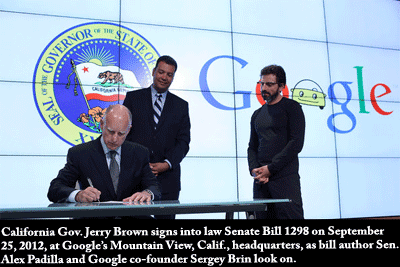

This is bad, bad news, but not surprising. Indeed, we at CEI warned California about these very issues back in 2012, months before the law behind these regulations was enacted. Unfortunately, the bill sponsor, then-Sen. and now Secretary of State Alex Padilla (D), failed to appreciate how his ostensibly pro-technology bill would negatively impact AV technology development and deployment. Keep in mind that Google was also supportive of this bill at the time, hosting Gov. Jerry Brown (D) for the signing ceremony at Google’s Mountain View headquarters with Google co-founder Sergey Brin looking on (left).

Thankfully, Google has now done a 180 on bad state laws, but the damage has already been done in California. And it is not—at least not mostly—the California DMV’s fault. For instance, before DMV can contemplate a regulation that would allow AVs to operate without a licensed and AV-certified driver inside during operation, the legislature required that a special public hearing be held (CA Veh Code § 38750(d)(4)). California legislators should fix their garbage AV statute (CA Veh Code § 38750) if they want their state to continue being a leader in this technology. If not, Texas will happily accept their Silicon Valley refugees.

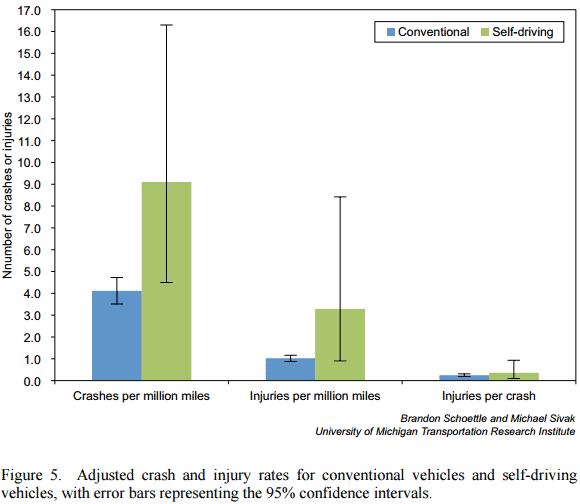

ABOUT THAT AV CRASH STUDY: Back in October, Brandon Schoettle and Michael Sivak of the University of Michigan’s Transportation Research Institute released a report, “A Preliminary Analysis of Real-World Crashes Involving Self-Driving Vehicles.” It got a lot of press for finding that self-driving cars are more likely to be involved in crashes than conventionally driven vehicles. What got less press was the authors’ admission, which was included in the abstract, that “the corresponding 95% confidence intervals overlap. Therefore, we currently cannot rule out, with a reasonable level of confidence, the possibility that the actual rates for self-driving vehicles are lower than for conventional vehicles.”

While the abstract is publicly available, the report is not, so I suspect that nearly all press reports and 100 percent of the commentary relying on those press reports were written without actually reading the underlying paper. But I have actually read the paper. For AVs, the authors compare 1.2 million publicly reported test miles (i.e., from public statements and press reports, not internal data) driven on public roads, essentially all from Google in and around Mountain View, Calif., with the nearly 3 trillion miles driven annually in the U.S. To Schoettle and Sivak’s credit, they also adjust the National Highway Traffic Safety Administration’s crash statistics upward in an attempt to account for underreporting of property-only and non-fatal injury crashes. Given the huge disparity between AV and conventionally driven mileage and crashes, comparisons are hard. The error bars are quite… interesting. Here is what they look like:

The upshot is that given the scant data and low data quality, it is unwise to draw any conclusions from this report. Is it possible that inattentive drivers failing to recognize Google’s super-legal AV driving, or rubberneckers gawking at the novel technology, mean AVs might be more susceptible to crashes in the present? Sure. But again, as the authors note, the 95% confidence intervals overlap. And as I showed you with the error bars, there is a massive amount of uncertainty on the AV data side of things. Schoettle and Sivak’s approach should become more useful as AVs rack up more miles and accidents, but for now there is little definitive to say about AV safety.
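
For readers who want to see why 1.2 million miles produces such wide error bars, here is a minimal sketch in Python. It treats the crash count as a Poisson variable and computes an exact (Garwood) 95% interval by bisection. This is my own illustration, not necessarily the method Schoettle and Sivak used. The crash count of 10 is a hypothetical placeholder, not a figure from the report, and the function names are made up for this example.

```python
import math


def poisson_cdf(k: int, mu: float) -> float:
    """Return P(X <= k) for X ~ Poisson(mu)."""
    if k < 0:
        return 0.0
    term = math.exp(-mu)  # P(X = 0)
    total = term
    for i in range(1, k + 1):
        term *= mu / i  # P(X = i) computed from P(X = i - 1)
        total += term
    return total


def _solve_decreasing(f, lo: float, hi: float, iters: int = 200) -> float:
    """Bisection root of a function that falls from f(lo) > 0 to f(hi) < 0."""
    for _ in range(iters):
        mid = (lo + hi) / 2.0
        if f(mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def exact_poisson_interval(count: int, alpha: float = 0.05):
    """Exact two-sided interval for a Poisson mean, given one observed count.

    Intended for small counts; the bracket below is a generous heuristic.
    """
    hi_bracket = count + 10.0 * math.sqrt(count + 1.0) + 20.0
    # Upper limit: the mean at which seeing `count` or fewer has probability alpha/2.
    upper = _solve_decreasing(
        lambda mu: poisson_cdf(count, mu) - alpha / 2.0, 0.0, hi_bracket
    )
    if count == 0:
        return 0.0, upper
    # Lower limit: the mean at which seeing `count` or more has probability alpha/2.
    lower = _solve_decreasing(
        lambda mu: poisson_cdf(count - 1, mu) - (1.0 - alpha / 2.0), 0.0, hi_bracket
    )
    return lower, upper


if __name__ == "__main__":
    MILES_MILLIONS = 1.2       # AV test mileage cited in this post
    HYPOTHETICAL_CRASHES = 10  # placeholder only, NOT a number from the report

    low, high = exact_poisson_interval(HYPOTHETICAL_CRASHES)
    print(f"Crashes per million miles, 95% interval: "
          f"{low / MILES_MILLIONS:.1f} to {high / MILES_MILLIONS:.1f}")
```

With only a handful of events, the upper end of the interval is several times the lower end. A rate built on trillions of conventional miles has no such problem, which is why the two intervals can overlap even when the point estimates look far apart.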